
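For good performance, you may need to enable WebGL in your web browser. This article assumes you are familiar with JavaScript and HTML.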

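Here we combine face tracking with a pretrained emotion detection model to recognize facial emotions from a webcam in real time.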

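First, define the list of emotion labels and a global variable that will hold the emotion detection model: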

    const emotions = [ "angry", "disgust", "fear", "happy", "neutral", "sad", "surprise" ];
    let emotionModel = null;

Then load the emotion detection model inside the async block, right after the face landmarks model:

    (async () => {
        ...
        // Load Face Landmarks Detection
        model = await faceLandmarksDetection.load(
            faceLandmarksDetection.SupportedPackages.mediapipeFacemesh
        );
        // Load Emotion Detection
        emotionModel = await tf.loadLayersModel( 'web/model/facemo.json' );
        ...
    })();

Next, add a function that runs the emotion prediction on the key facial points:

    async function predictEmotion( points ) {
        let result = tf.tidy( () => {
            const xs = tf.stack( [ tf.tensor1d( points ) ] );
            return emotionModel.predict( xs );
        });
        let prediction = await result.data();
        result.dispose();
        // Get the index of the maximum value
        let id = prediction.indexOf( Math.max( ...prediction ) );
        return emotions[ id ];
    }
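The last step of predictEmotion, turning the model's scores into a label, can be sketched on its own without TensorFlow.js. This is a minimal illustration, assuming the model outputs one score per entry in the emotions array; labelFromScores is a hypothetical helper name, and the scores below are made up rather than real model output.

```javascript
// Same label order as in the article
const emotions = [ "angry", "disgust", "fear", "happy", "neutral", "sad", "surprise" ];

// Hypothetical helper: pick the label with the highest score.
// Works with plain arrays and typed arrays such as Float32Array.
function labelFromScores( scores, labels ) {
    if( scores.length !== labels.length ) {
        throw new Error( `Expected ${labels.length} scores, got ${scores.length}` );
    }
    let best = 0;
    for( let i = 1; i < scores.length; i++ ) {
        if( scores[ i ] > scores[ best ] ) {
            best = i;
        }
    }
    return { label: labels[ best ], score: scores[ best ] };
}

// Made-up scores for illustration only
const example = labelFromScores( [ 0.05, 0.01, 0.02, 0.8, 0.07, 0.03, 0.02 ], emotions );
console.log( example.label );
```

Returning the score along with the label makes it possible to ignore low-confidence predictions, should that turn out to be useful.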

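Finally, inside trackFace, gather the key facial points from the detection and pass them to the emotion predictor.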

async function trackFace() {

...

let points = null;

faces.forEach( face => {

...

// Add just the nose, cheeks, eyes, eyebrows & mouth

const features = [

"noseTip",

"leftCheek",

"rightCheek",

"leftEyeLower1", "leftEyeUpper1",

"rightEyeLower1", "rightEyeUpper1",

"leftEyebrowLower", //"leftEyebrowUpper",

"rightEyebrowLower", //"rightEyebrowUpper",

"lipsLowerInner", //"lipsLowerOuter",

"lipsUpperInner", //"lipsUpperOuter",

];

points = [];

features.forEach( feature => {

face.annotations[ feature ].forEach( x => {

points.push( ( x[ 0 ] - x1 ) / bWidth );

points.push( ( x[ 1 ] - y1 ) / bHeight );

});

});

});

if( points ) {

let emotion = await predictEmotion( points );

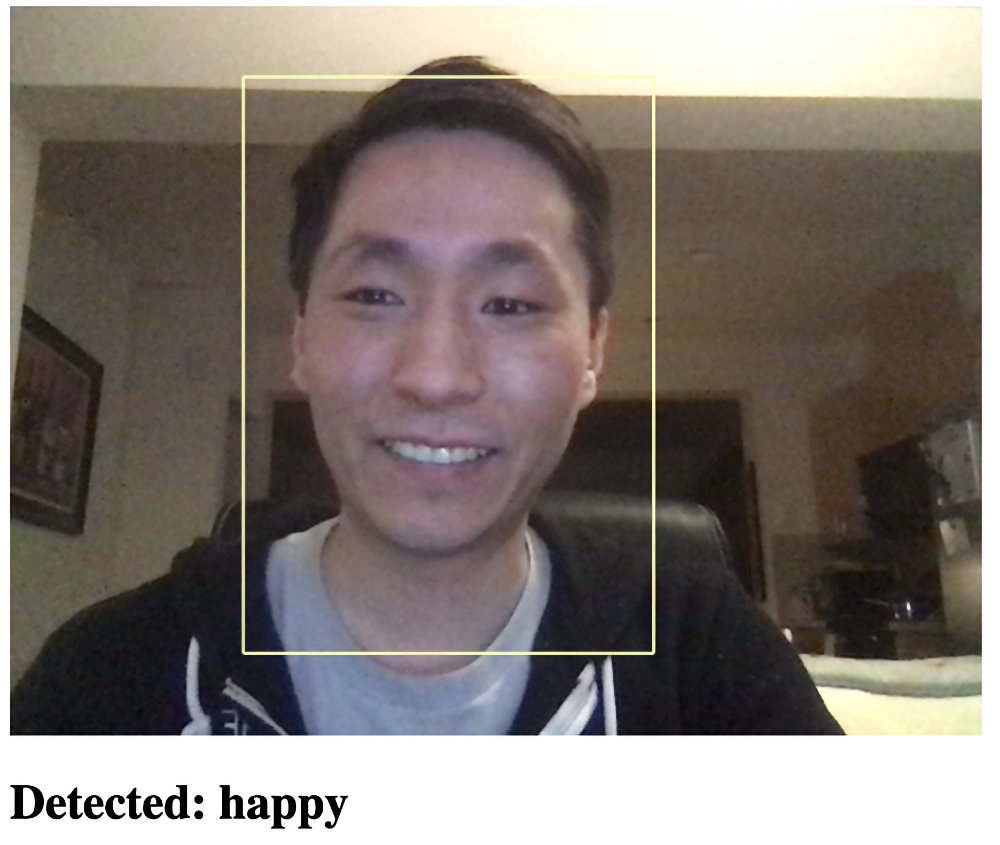

setText( `Detected: ${emotion}` );

}

else {

setText( "No Face" );

}

requestAnimationFrame( trackFace );

}
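The inner loop of trackFace rescales each landmark so that it is expressed relative to the face's bounding box instead of in video pixels. The sketch below isolates that step so it can be tried without a webcam. normalizePoints is a hypothetical helper, and fakeFace is made-up data that only imitates the fields used in the article (boundingBox.topLeft, boundingBox.bottomRight, and annotations).

```javascript
// Hypothetical helper mirroring the normalization loop in trackFace
function normalizePoints( annotations, features, topLeft, bottomRight ) {
    const [ x1, y1 ] = topLeft;
    const bWidth = bottomRight[ 0 ] - x1;
    const bHeight = bottomRight[ 1 ] - y1;
    if( bWidth <= 0 || bHeight <= 0 ) {
        throw new Error( "Bounding box has no area" );
    }
    const points = [];
    features.forEach( feature => {
        if( !annotations[ feature ] ) {
            // A missing feature would change the input length the model expects
            throw new Error( `Missing annotation: ${feature}` );
        }
        annotations[ feature ].forEach( p => {
            points.push( ( p[ 0 ] - x1 ) / bWidth );  // x as a fraction of box width
            points.push( ( p[ 1 ] - y1 ) / bHeight ); // y as a fraction of box height
        });
    });
    return points;
}

// Made-up face data for illustration only
const fakeFace = {
    boundingBox: { topLeft: [ 100, 50 ], bottomRight: [ 300, 250 ] },
    annotations: {
        noseTip: [ [ 200, 150, -10 ] ],
        leftCheek: [ [ 150, 200, 5 ] ]
    }
};

const normalized = normalizePoints(
    fakeFace.annotations,
    [ "noseTip", "leftCheek" ],
    fakeFace.boundingBox.topLeft,
    fakeFace.boundingBox.bottomRight
);
console.log( normalized ); // [ 0.5, 0.5, 0.25, 0.75 ]
```

Because the values are fractions of the bounding box, the same expression should produce similar inputs whether the face is near the camera or far from it.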


For reference, here is the complete code for this project:

    <html>
    <head>
        <title>Real-Time Facial Emotion Detection</title>
        <script src="https://cdn.jsdelivr.net/npm/@tensorflow/tfjs@2.4.0/dist/tf.min.js"></script>
        <script src="https://cdn.jsdelivr.net/npm/@tensorflow-models/face-landmarks-detection@0.0.1/dist/face-landmarks-detection.js"></script>
    </head>
    <body>
        <canvas id="output"></canvas>
        <video id="webcam" playsinline style="
            visibility: hidden;
            width: auto;
            height: auto;
            ">
        </video>
        <h1 id="status">Loading...</h1>
        <script>
        function setText( text ) {
            document.getElementById( "status" ).innerText = text;
        }

        function drawLine( ctx, x1, y1, x2, y2 ) {
            ctx.beginPath();
            ctx.moveTo( x1, y1 );
            ctx.lineTo( x2, y2 );
            ctx.stroke();
        }

        async function setupWebcam() {
            return new Promise( ( resolve, reject ) => {
                const webcamElement = document.getElementById( "webcam" );
                const navigatorAny = navigator;
                navigator.getUserMedia = navigator.getUserMedia ||
                    navigatorAny.webkitGetUserMedia || navigatorAny.mozGetUserMedia ||
                    navigatorAny.msGetUserMedia;
                if( navigator.getUserMedia ) {
                    navigator.getUserMedia( { video: true },
                        stream => {
                            webcamElement.srcObject = stream;
                            webcamElement.addEventListener( "loadeddata", resolve, false );
                        },
                        error => reject());
                }
                else {
                    reject();
                }
            });
        }

        const emotions = [ "angry", "disgust", "fear", "happy", "neutral", "sad", "surprise" ];
        let emotionModel = null;

        let output = null;
        let model = null;

        async function predictEmotion( points ) {
            let result = tf.tidy( () => {
                const xs = tf.stack( [ tf.tensor1d( points ) ] );
                return emotionModel.predict( xs );
            });
            let prediction = await result.data();
            result.dispose();
            // Get the index of the maximum value
            let id = prediction.indexOf( Math.max( ...prediction ) );
            return emotions[ id ];
        }

        async function trackFace() {
            const video = document.querySelector( "video" );
            const faces = await model.estimateFaces( {
                input: video,
                returnTensors: false,
                flipHorizontal: false,
            });
            output.drawImage(
                video,
                0, 0, video.width, video.height,
                0, 0, video.width, video.height
            );

            let points = null;
            faces.forEach( face => {
                // Draw the bounding box
                const x1 = face.boundingBox.topLeft[ 0 ];
                const y1 = face.boundingBox.topLeft[ 1 ];
                const x2 = face.boundingBox.bottomRight[ 0 ];
                const y2 = face.boundingBox.bottomRight[ 1 ];
                const bWidth = x2 - x1;
                const bHeight = y2 - y1;
                drawLine( output, x1, y1, x2, y1 );
                drawLine( output, x2, y1, x2, y2 );
                drawLine( output, x1, y2, x2, y2 );
                drawLine( output, x1, y1, x1, y2 );

                // Add just the nose, cheeks, eyes, eyebrows & mouth
                const features = [
                    "noseTip",
                    "leftCheek",
                    "rightCheek",
                    "leftEyeLower1", "leftEyeUpper1",
                    "rightEyeLower1", "rightEyeUpper1",
                    "leftEyebrowLower", //"leftEyebrowUpper",
                    "rightEyebrowLower", //"rightEyebrowUpper",
                    "lipsLowerInner", //"lipsLowerOuter",
                    "lipsUpperInner", //"lipsUpperOuter",
                ];
                points = [];
                features.forEach( feature => {
                    face.annotations[ feature ].forEach( x => {
                        points.push( ( x[ 0 ] - x1 ) / bWidth );
                        points.push( ( x[ 1 ] - y1 ) / bHeight );
                    });
                });
            });

            if( points ) {
                let emotion = await predictEmotion( points );
                setText( `Detected: ${emotion}` );
            }
            else {
                setText( "No Face" );
            }

            requestAnimationFrame( trackFace );
        }

        (async () => {
            await setupWebcam();
            const video = document.getElementById( "webcam" );
            video.play();
            let videoWidth = video.videoWidth;
            let videoHeight = video.videoHeight;
            video.width = videoWidth;
            video.height = videoHeight;

            let canvas = document.getElementById( "output" );
            canvas.width = video.width;
            canvas.height = video.height;

            output = canvas.getContext( "2d" );
            output.translate( canvas.width, 0 );
            output.scale( -1, 1 ); // Mirror cam
            output.fillStyle = "#fdffb6";
            output.strokeStyle = "#fdffb6";
            output.lineWidth = 2;

            // Load Face Landmarks Detection
            model = await faceLandmarksDetection.load(
                faceLandmarksDetection.SupportedPackages.mediapipeFacemesh
            );
            // Load Emotion Detection
            emotionModel = await tf.loadLayersModel( 'web/model/facemo.json' );

            setText( "Loaded!" );

            trackFace();
        })();
        </script>
    </body>
    </html>
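The setupWebcam function in the listing relies on the legacy callback-based navigator.getUserMedia and its vendor-prefixed variants. Current browsers expose a promise-based navigator.mediaDevices.getUserMedia instead. The following is a sketch of an alternative under that assumption, not part of the original project. setupWebcamModern is a hypothetical name, and the media devices object and video element are passed in as parameters so the function can be exercised outside a browser.

```javascript
// Sketch: promise-based webcam setup (hypothetical alternative to setupWebcam).
// In a browser, call it as:
//   await setupWebcamModern( navigator.mediaDevices, document.getElementById( "webcam" ) );
async function setupWebcamModern( mediaDevices, videoElement ) {
    if( !mediaDevices || typeof mediaDevices.getUserMedia !== "function" ) {
        throw new Error( "getUserMedia is not available in this browser" );
    }
    // Rejects if the user denies camera access or no camera is present
    const stream = await mediaDevices.getUserMedia( { video: true } );
    videoElement.srcObject = stream;
    // Wait until the first frame is available before continuing
    await new Promise( resolve => {
        videoElement.addEventListener( "loadeddata", resolve, { once: true } );
    });
    return videoElement;
}
```

Unlike the version in the listing, failures here carry an error that the caller can report, for example by passing its message to setText.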

What's Next?

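In this project, face tracking and emotion detection work together in the browser with only a small amount of JavaScript, and there is much more you can build with TensorFlow.js. The next step is a Snapchat-style face filter, with 3D rendering added through ThreeJS.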
